Kling 3.0 vs Kling 3.0 Omni: Which Model Is Better for AI Video Generation?

Understanding the Distinctions!

Released in February 2026, the Kling 3.0 model series marks a new phase in the Kling video generation ecosystem. The series comprises two models from Kuaishou: Kling Video 3.0 and Kling Video 3.0 Omni.

Kling 3.0 Series Release and Model Overview

Kling 3.0 evolves from the Kling 2.6 model and focuses on improved temporal stability, multi-shot control, and structured scene generation. Kling 3.0 Omni, by contrast, is built on the Kling O1 architecture and introduces support for video reference input, enabling users to guide generation using an existing video.

While these models originate from different architectural branches, their supported API workflows currently overlap. At the time of writing, video reference input is not supported via API. This means that for standard generation workflows such as:

1. Text-to-Video (Single Shot)

2. Text-to-Video (Multi Shot)

3. Image-to-Video (I2V)

both Kling 3.0 and Kling 3.0 Omni operate under comparable input constraints.

In theory, this suggests that output behavior for standard video generation should be similar. In this comparison, we evaluate Kling 3.0 and Kling 3.0 Omni under identical generation conditions to determine whether measurable differences in video quality emerge when video reference is not used.

Final Verdict!

Kling 3.0 and Kling 3.0 Omni perform similarly in standard text-to-video generation, producing comparable visual quality and motion across most prompts. The key distinction emerges when reference images are involved: Kling 3.0 Omni handles reference-guided generation more reliably and stays more consistent with the provided input. For general T2V workflows, either model performs well, but for projects that rely on image references, Kling 3.0 Omni is the more dependable choice.

Kling Video API: What Is Actually Different?

Both models support:

1. Text-to-Video (T2V)

2. Image-to-Video (I2V)

3. Multi-shot generation

4. Multi-character coreference

5. Native audio generation

6. Flexible duration settings

The major distinction is that Kling 3.0 Omni supports video reference input. For all other workflows, the models are expected to behave similarly.

Hence, this comparison focuses entirely on output behavior, not on the feature set.

Evaluation: How We Evaluate Kling 3.0 API vs Kling 3.0 Omni API

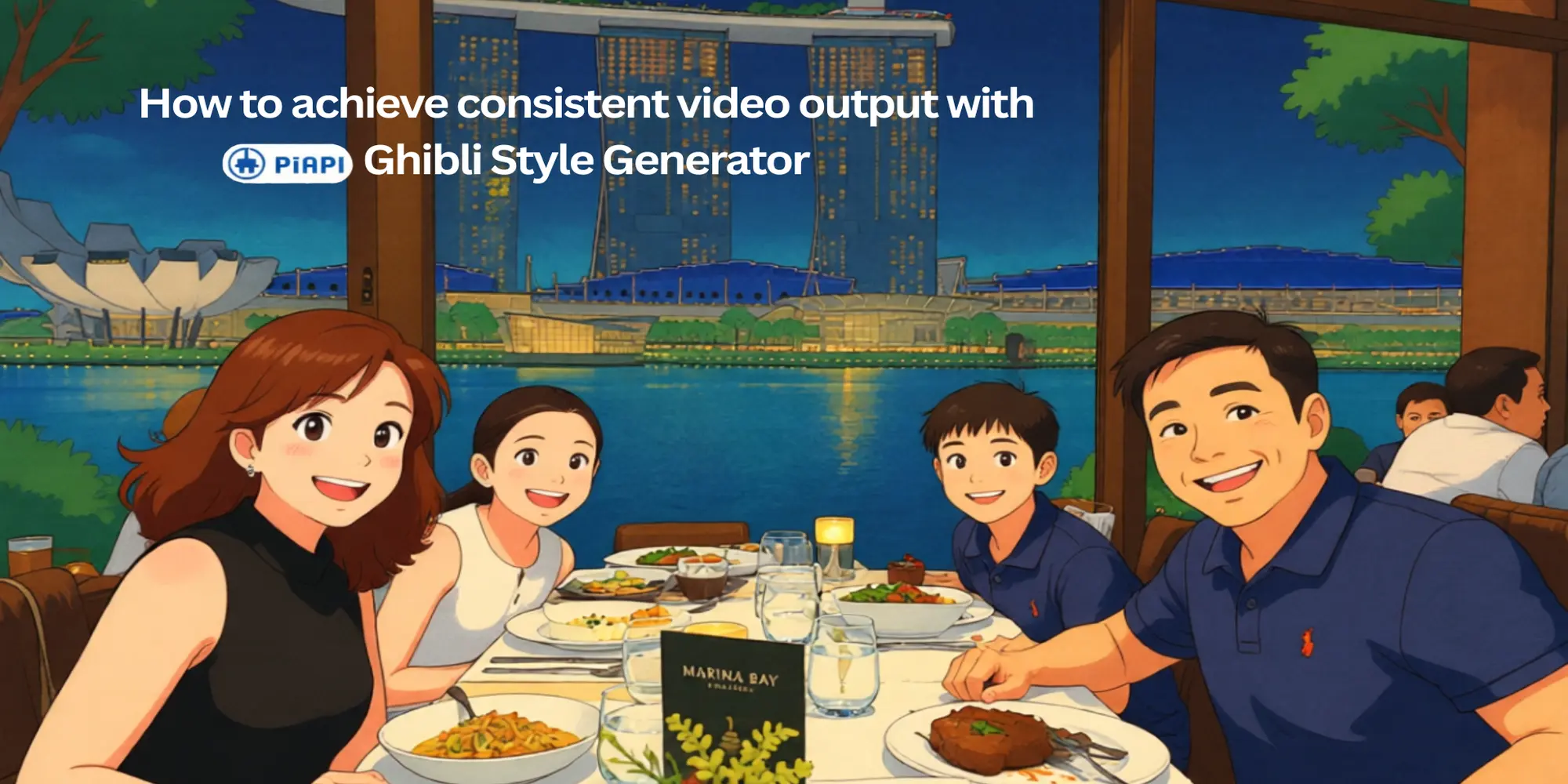

For the qualitative comparison, we focus on the single-shot T2V, multi-shot T2V, and I2V capabilities of both models. For image-to-video generation, the input images were generated with our Nano Banana Pro API playground to ensure high-quality source material.

The evaluation framework is adapted from a Labelbox-style T2V assessment and evaluates each output across four dimensions:

1. Prompt adherence

2. Video realism

3. Video resolution

4. Artifacts

Each example uses the same prompt for Kling Video 3.0 API and Kling Video 3.0 Omni API to isolate model behavior rather than prompt variation. Because both Kling 3.0 and Kling 3.0 Omni support native sound generation, all videos evaluated in this comparison were generated with audio.
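For readers who want to repeat this kind of side-by-side review, the four dimensions and the same-prompt pairing described above could be tracked with a small record like the one below. This is only a sketch: the `Observation` class, the `paired_observations` helper, and the dimension keys are hypothetical names chosen for illustration, not part of any Kling or PiAPI tooling, and the notes are free-text because this comparison is qualitative.

```python
from dataclasses import dataclass, field

# The four dimensions used in this comparison (key names are illustrative).
DIMENSIONS = ("prompt_adherence", "video_realism", "video_resolution", "artifacts")

# Display labels for the two models under test; not API identifiers.
MODELS = ("Kling 3.0", "Kling 3.0 Omni")


@dataclass
class Observation:
    """Free-text reviewer notes for one model on one example."""
    model: str
    example: str
    notes: dict = field(default_factory=dict)

    def add_note(self, dimension: str, text: str) -> None:
        # Reject anything outside the four agreed dimensions.
        if dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {dimension}")
        self.notes[dimension] = text

    def missing(self) -> list:
        # Dimensions the reviewer has not covered yet, in rubric order.
        return [d for d in DIMENSIONS if d not in self.notes]


def paired_observations(example: str) -> list:
    """Create one empty record per model for the same example and prompt."""
    return [Observation(model=m, example=example) for m in MODELS]
```

Calling `paired_observations("Example 1: Single shot (T2V)")` yields one record per model, and `missing()` makes it easy to confirm that both outputs were reviewed on all four dimensions before drawing a conclusion.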

Video Comparison: Kling 3.0 vs Kling 3.0 Omni

Example 1: Single shot (T2V)

We begin with a controlled single-shot generation.

Kling 3.0 Output

Kling 3.0 Omni Output

Prompt: A cinematic shot inside a quiet subway train at night. A young man in a navy jacket sits by the window as city lights streak past outside. The camera starts in a medium-wide shot from across the aisle, then slowly pushes in toward him. As the train moves, subtle reflections of passing lights appear on the window glass and faintly across his face. He turns his head slightly toward the window and exhales softly. The lighting should feel natural and consistent with a moving train environment. No cuts.

Analysis: Both Kling 3.0 and Kling 3.0 Omni followed most scene instructions, including environment, camera push-in, and lighting consistency. However, neither model correctly executed the specified head turn toward the window or the subtle exhale. Both demonstrated high realism, with stable lighting and convincing reflections. Resolution was strong in both outputs, and no visible artifacts were observed. Overall, performance was comparable, with minor action-level deviations in prompt adherence.

Example 2: Multi shot (T2V)

Next, we test a structured multi-shot sequence, the feature that sets the Kling 3.0 model series apart from earlier Kling models.

Kling 3.0 Output

Kling 3.0 Omni Output

Prompt 1: A detective in a grey suit examines a small metal key under warm desk lighting in a dim office.

Prompt 2: The camera cuts to a medium shot of him standing near a window as rain hits the glass behind him.

Prompt 3: Close-up of his face as he narrows his eyes thoughtfully.

Analysis: Both models followed the multi-shot structure and maintained character consistency across shots. However, although the prompt specified that the window should be behind the detective, both outputs positioned him facing the window, indicating a spatial misinterpretation. Realism and resolution were strong in both cases, with stable lighting and facial detail. Kling 3.0 showed no visible artifacts, while Kling 3.0 Omni exhibited a brief, slight distortion of the key in the first shot. Overall, both performed similarly, with minor spatial deviation and a small object-level artifact observed in the Omni output.

Example 3: I2V

To assess identity consistency, we tested the I2V generation capability.

Following the prompt structure from our Kling AI API documentation, we referenced the input images in the prompt as @image_1 (the city at night) and @image_2 (the woman in a white blazer).

Kling 3.0 Output

Kling 3.0 Omni Output

Prompt: Using the provided reference images, generate a video of the woman from @image_2 walking confidently through the environment shown in @image_1. The lighting, atmosphere, and color tone of the street must match @image_1, while preserving the facial features, hairstyle, and clothing details of @image_2. The camera slowly tracks forward toward her as she walks. No cuts.

Analysis: Kling 3.0 failed to follow the reference-based prompt and did not meaningfully incorporate the provided images, generating only a generic walking scene. Kling 3.0 Omni, by contrast, preserved subject and environmental consistency from the reference images but reversed the intended camera movement. Kling 3.0 showed fair realism in motion physics, while Kling 3.0 Omni demonstrated stronger overall realism and integration. Resolution was good in both outputs, and no clear artifacts were observed. Overall, Kling 3.0 Omni showed significantly stronger reference adherence, while Kling 3.0 struggled with image-guided generation.
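Because the I2V prompt ties each subject to a numbered placeholder, it can help to confirm before submitting a job that every @image_N in the prompt has a matching reference image and that no supplied image goes unmentioned. The sketch below is a local pre-flight check only. The function names are hypothetical, and it assumes placeholders are numbered from 1 in the order the images are supplied, which matches the @image_1 and @image_2 usage in this example but should be confirmed against the API documentation.

```python
import re


def referenced_image_indices(prompt: str) -> list:
    """Return the sorted, de-duplicated N values of every @image_N in the prompt."""
    return sorted({int(n) for n in re.findall(r"@image_(\d+)", prompt)})


def check_references(prompt: str, image_count: int) -> list:
    """List mismatches between prompt placeholders and supplied images.

    Assumes 1-based numbering (an assumption, not a documented guarantee).
    An empty list means every placeholder and every image is accounted for.
    """
    used = referenced_image_indices(prompt)
    problems = []
    for i in used:
        if i < 1 or i > image_count:
            problems.append(f"@image_{i} has no matching reference image")
    for i in range(1, image_count + 1):
        if i not in used:
            problems.append(f"reference image {i} is never mentioned in the prompt")
    return problems
```

For the prompt used in this example with two reference images, `check_references` returns an empty list. A check like this cannot tell whether a model will actually honor the references, as the Kling 3.0 result shows, but it rules out a malformed prompt as the cause.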

Closing Thoughts On Kling 3.0 vs Kling 3.0 Omni

Across the three controlled tests, Kling 3.0 and Kling 3.0 Omni showed largely comparable performance in standard text-to-video generation. In both single-shot and multi-shot scenarios, the models delivered strong realism, high resolution, and minimal artifacts, with only minor deviations in spatial interpretation and fine-grained action adherence.

The clearest difference appeared in the image-to-video evaluation. Kling 3.0 Omni preserved reference consistency and environmental integration effectively, while Kling 3.0 struggled to meaningfully incorporate the provided reference images. This suggests that, despite overlapping API workflows, architectural differences may affect how reference-based inputs are interpreted.

Overall, when video reference is not used, Kling 3.0 API and Kling 3.0 Omni API perform similarly in standard T2V tasks. However, Kling 3.0 Omni shows stronger reliability in reference-guided generation, indicating more robust multimodal alignment under image-constrained conditions. For text-driven workflows, both models offer comparable quality, but for stronger reference consistency, Kling 3.0 Omni may provide a more stable foundation.

Start testing both models and get your Kling API key via PiAPI today!
